Analysis: “Compute vs People” Isn’t the Real Problem

It’s a Bigger Issue We’re Not Addressing

A recent post I shared on Meta’s layoffs struck more of a nerve than I had anticipated. The reaction suggested that this was not being viewed as an isolated corporate action, but as part of a broader pattern.

On reflection, this raises a wider question. There may be a gap emerging, not only in the economy but in the way we govern and interpret the cumulative effects of artificial intelligence.

To understand this, it helps to look at where AI is already applying pressure, and what these shifts mean when viewed together.

The shift in how people are viewed

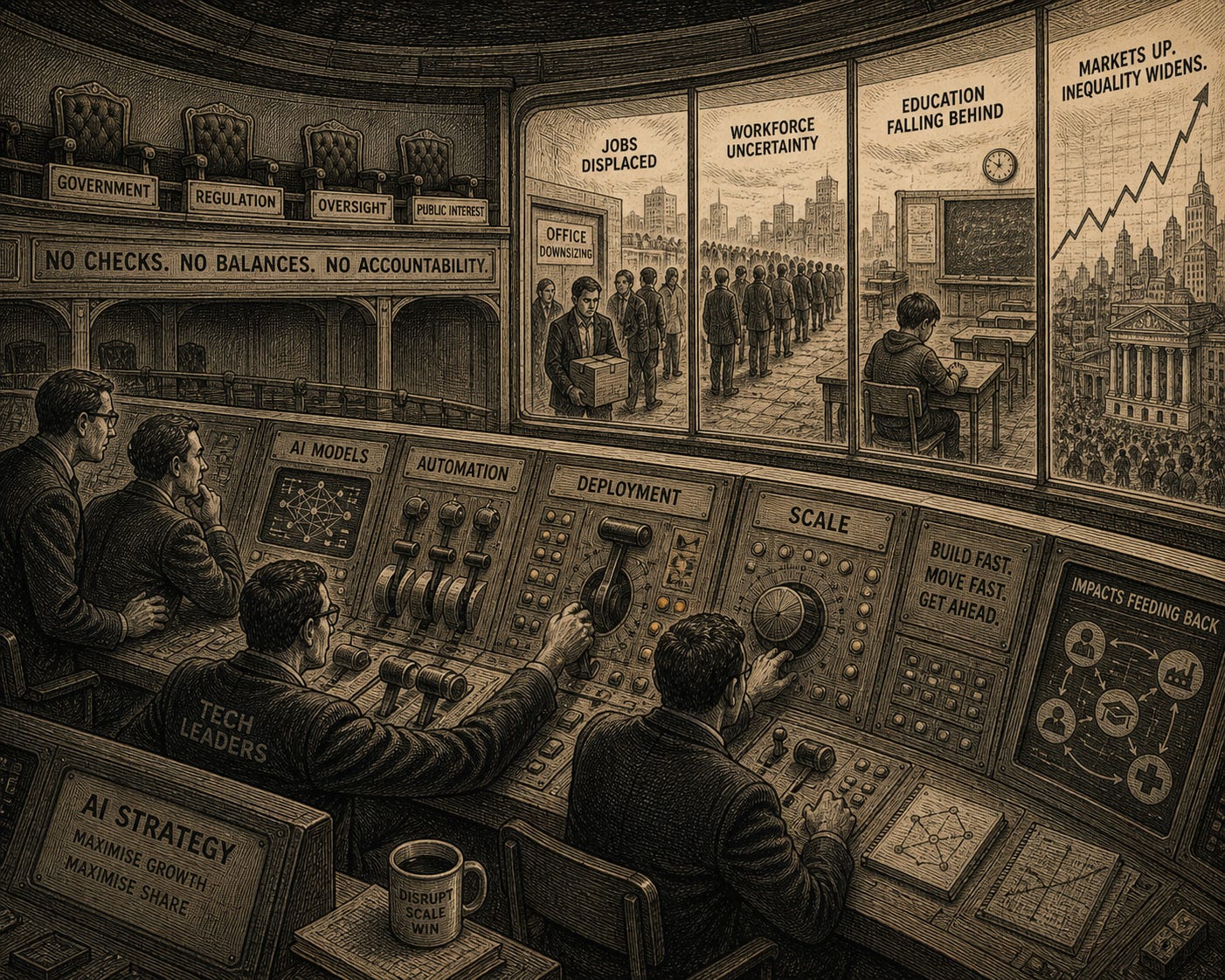

At the centre of this discussion, and of my recent post, is a changing perception of people within organisations. AI is consistently framed as a mechanism for efficiency, and that framing is increasingly paired with a view of people as a cost to be reduced.

This is emerging across industries, raising fundamental questions about how human value is defined in an AI-driven environment.

What is often overlooked, however, is that the very capabilities AI depends on, including the creation of meaningful data, remain human.

As organisations move to reduce cost, these capabilities risk being undervalued or removed entirely, not because they are no longer needed, but because decisions are still shaped by an outdated mindset that frames people and compute as a trade-off.

The result is a growing imbalance: the capabilities required to make AI effective are being diminished, while the systems themselves continue to scale.

At the same time, accountability for these decisions is lacking. Choices that reshape workforces, influence markets, and redefine value are being made at scale, without a clear line of responsibility for their broader consequences.

Education and skills

The implications extend into education and skills development, where systems are not evolving at the same pace as the technologies they are expected to support.

The way we teach remains largely structured around traditional models of learning, while the capabilities required for the world we are moving into are not being fully considered.

Many of the roles people are being prepared for today may not exist in the same form in the future. Education continues to reflect the world as it was, rather than the world as it is becoming.

While there is growing discussion around AI in education, there is far less clarity on what this means in practice.

The consequence is a widening disconnect between what is taught and what is needed, with long-term implications for employability, productivity, and social mobility.

Markets and the power of narrative

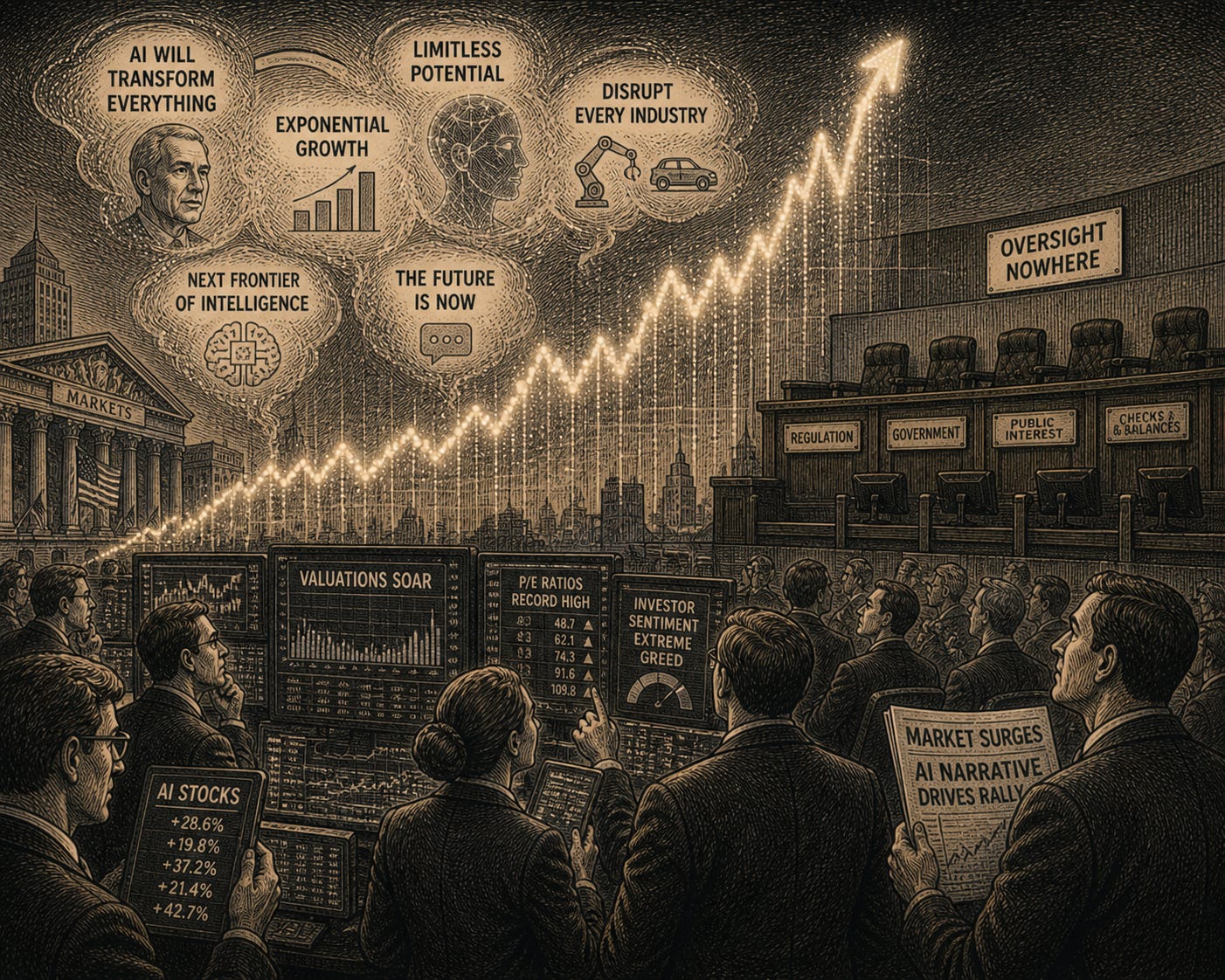

Markets are also responding to AI, not through traditional fundamentals but through narrative.

Valuations, capital allocation, and investor sentiment are increasingly influenced by new feature announcements and by what AI companies say, rather than by current performance.

This dynamic amplifies the influence of those shaping the narrative, particularly founders and technology leaders, whose projections can move markets and direct significant flows of capital.

This creates an environment where perception and expectation can shape outcomes ahead of tangible results.

A narrative without accountability

More importantly, some of the most influential voices in technology are making increasingly assertive claims about the future, particularly in relation to work, learning, and the role of people in an AI-driven world.

These claims suggest that large portions of work may disappear, that artificial intelligence will surpass human ability across many domains, and that the structures which have historically underpinned careers and economic participation will be fundamentally reshaped.

These are not marginal observations; they go to the heart of how societies organise themselves and how individuals derive income, purpose, and identity.

Despite this, such assertions are often made without meaningful challenge or scrutiny, and without a clear framework for accountability.

In effect, narratives about the future are not only shaping expectations, but increasingly influencing real decisions, without a corresponding structure to evaluate consequences or hold those shaping them to account.

Decisions with wider economic consequences

There is also a broader issue: a lack of big-picture thinking about the implications for the economy as a whole.

Measures such as GDP, which are used to assess economic health, may begin to diverge from reality.

We could see a situation where productivity rises, yet unemployment remains high, creating a disconnect between reported growth and lived experience.

As workforce reductions accelerate, second-order effects begin to show.

Fewer people in employment means lower tax revenues and greater pressure on public finances, which in turn feeds back into the same system organisations depend on.

What works at an organisational level can, at scale, begin to erode the broader economy.

The concentration of power

At the same time, there is an increasing concentration of power.

The development and deployment of advanced AI systems is largely concentrated within a relatively small number of organisations.

This extends influence beyond technology into markets, labour, and even policy.

While this has enabled rapid innovation, it also raises questions around balance, accountability, and how decisions affecting large parts of the economy are being shaped.

The gap

Taken together, these shifts point to something bigger.

It is reasonable to ask where governments sit within this.

Their focus tends to centre on safety, ethics, and responsible use. These are necessary, but they do not fully address the broader economic and societal implications.

Governments also lack the mechanisms to respond at the pace required.

Policy remains fragmented, with limited alignment on how to assess AI as a system-wide force rather than as a set of isolated issues.

The result is a growing divergence.

AI is being regulated in terms of its use, but not in terms of the decisions it enables, the trade-offs it introduces, or the system-level consequences that follow.

Implications

This divergence introduces a range of risks that extend beyond individual sectors. Misalignment between technological progress and economic structures can lead to an uneven distribution of value, labour displacement without clear pathways into new roles, and increased volatility driven by market expectations.

Over time, these pressures can compound, affecting growth, stability, and public trust.

The absence of a mechanism to assess and respond to these cumulative effects means that many of these risks may only become visible once they have already materialised.

This raises a broader question: do we need a dedicated layer that focuses specifically on AI, not only in terms of its use, but in terms of its wider economic and societal impact?

Introducing a coordination layer

If this is a structural gap, it suggests the need for a structural response.

It feels like we have reached a turning point.

What I would propose is a coordination layer: one that sits between technological innovation, market dynamics, and policy frameworks, with a singular focus on artificial intelligence and its system-wide implications.

Its purpose is not to regulate AI in isolation, but to understand and align how it is shaping the broader economy and society.

This body would bring together relevant perspectives from across a nation, ensuring that AI developments are considered as part of a coherent whole rather than piecemeal.

It would work alongside governments to help shape policy, while connecting fragmented areas of AI activity across institutions and domains.

This is not about limiting innovation. It is about recognising that when technology begins to influence the foundations of the economy, it requires coordination.

In effect, this layer acts as the connective tissue between the forces driving AI and the outcomes across society.

How it would operate

This layer would operate independently, with the ability to engage across all three domains.

With technology providers, it would establish clear expectations and guardrails around deployment at scale, particularly where decisions have societal impact, such as large-scale workforce changes or the release of transformative capabilities.

With markets, it would introduce greater transparency around the assumptions underpinning AI-driven valuations and narratives, helping to ensure that capital is allocated with a clearer understanding of risk and consequence.

With governments, it would act as a connective mechanism, providing a system-level view that informs policy beyond fragmented regulatory lenses, helping to ensure consistency across departments and alignment at a national level.

Its role would be to ensure that actions taken within one part of the system are understood in the context of their wider effects.

This includes visibility across employment, growth, education, and income, enabling a more complete view of AI’s impact.

At the same time, it would introduce a level of accountability.

When decisions or narratives with systemic consequences are advanced, there would be a mechanism to assess their impact, challenge underlying assumptions, and hold actors to account.

In essence, it would function as the glue across the system, ensuring consistency, transparency, and accountability, while putting in place the conditions required for all parts of the ecosystem to evolve and thrive.

Closing reflections

To allow AI, and the choices that shape it, to continue evolving along its current path without addressing the full extent of its impact is not neutrality; it is a decision.

The choice is becoming increasingly clear. We either continue to move in fragments, allowing technology, markets, and policy to drift apart, or we introduce the coordination required to align them before the consequences scale.

One path is reactive… the other is deliberate.

One of these paths will define the outcome for all of us.